Neuroscience: The Risks of Reading the Brain

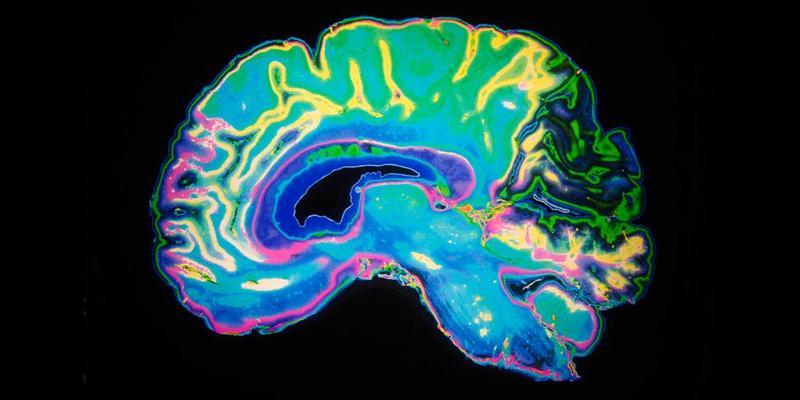

Since its 1992 debut, functional magnetic resonance imaging (fMRI) has revolutionized our ability to view the human brain in action and understand the processes that underlie mental functions such as decision-making. As brain-imaging technologies have grown more powerful, their influence has seeped from the laboratory into the real world.

In this book, clinical neuropsychologist Barbara Sahakian and neuroscientist Julia Gottwald give a whistle-stop tour of some ways in which neuroimaging has begun to affect our views on human behaviour and society. Their discussion balances a rightful enthusiasm for fMRI with a sober appreciation of its limitations and risks.

After the obligatory introduction to fMRI, which measures blood oxygenation to image neural activity, Sahakian and Gottwald address a question at the heart of neuroimaging: can it read minds?

The answer largely depends on one’s definition of mind-reading. As the authors outline, in recent years fMRI data have been used to decode the contents of thoughts (such as words viewed by a study participant) and mental states (such as a person’s intention to carry out an action), even in sleep.

These methods don’t yet enable researchers to decode the ‘language of thought’, which is what mind-reading connotes for many. But given the growing use of advanced machine-learning methods such as deep neural networks to analyse neuroimaging data, that may just be a matter of time.

The authors rightly highlight the need for a deeper discussion about the ethical use of neuroimaging. Looking at fMRI as a lie detector, they note that its shortcomings (particularly the lack of well-established degrees of accuracy) have so far kept it out of the criminal courts. They outline the brain’s network for making moral judgements and how it may be disrupted in conditions such as psychopathy. They conclude that because not all individuals with these conditions commit criminal acts, neuroimaging will probably be an imperfect predictor of criminal behaviour. The emerging field of “neuromarketing” also raises concerns. Citing research such as the ‘Coke vs Pepsi’ study, which demonstrates how brain activity reveals preferences for consumer goods, Sahakian and Gottwald call for stronger regulation to prevent misuse of the technology.

They discuss one of the fundamental problems in lay thinking about neuroscience — what I often call folk dualism. This is the idea (crucial in legal applications of neuroimaging) that there is somehow a difference between brain and mind that is relevant to understanding people’s actions. They chide: “Saying ‘My brain made me do it!’ makes just as much sense as saying that J. K. Rowling convinced the author of the Harry Potter series to write seven books about the young wizard.”

One surprise in the book is its rich coverage of behavioural research. For example, the discussions about racial bias and self-control focus mainly on psychological studies rather than brain imaging. I appreciated this, given that neuroimaging is generally only as solid as the behavioural research that underpins it.

The authors’ discussion of the ongoing challenges in reproducing some psychological effects is commendably forthright. For instance, they offer an in-depth, remarkably fair discussion of “ego depletion”, in which exerting self-control in one domain (such as solving a difficult cognitive problem) is thought to impair one’s ability to exert self-control in another (such as choosing healthy over unhealthy foods). The implication is that self-control is like a muscle that can tire. Many studies and meta-analyses have demonstrated evidence for ego depletion, but a large-scale trial led by psychologists Martin Hagger and Nikos Chatzisarantis failed to replicate the result.

The methodological limitations of fMRI get varying coverage. The authors underline that the technology reveals only correlations. Just because an area is active when one experiences fear does not mean that the region is necessary for the experience of fear. Causal necessity can be demonstrated only by manipulating the function of a brain region, either through brain stimulation or the study of brain lesions (such as from a stroke). For instance, many studies show that the ventromedial prefrontal cortex (important in value-based decision-making) is activated when research participants are considering how much they are willing to pay for consumer goods. But recent work has found that some people with lesions to this area show no impairment in such abilities.

I had minor quibbles. Sahakian and Gottwald discuss the problem of “reverse inference” regrettably late in the book. This consists of researchers inferring a psychological state (say, fear) from activation in a specific brain region (such as the amygdala). This is, as the authors outline, problematic because there is rarely such a one-to-one correspondence; most brain regions are activated in many different contexts. However, at several points they use the same kind of reasoning to interpret fMRI results.
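The weakness of reverse inference can be made concrete with Bayes' rule. The following is a minimal sketch, not anything taken from the book: the function name and all probabilities are invented purely for illustration. It shows that how much an activation tells us about a mental state depends on how often the region is also active when that state is absent.

```python
def posterior(p_act_given_state, p_act_given_other, prior_state):
    """Return P(state | activation) using Bayes' rule."""
    evidence = (p_act_given_state * prior_state
                + p_act_given_other * (1 - prior_state))
    return p_act_given_state * prior_state / evidence

# Hypothetical numbers, chosen only to illustrate the argument.
# Case 1: the region is rarely active outside the state of interest.
selective = posterior(0.8, 0.05, 0.2)
# Case 2: the region is active in many other contexts as well.
unselective = posterior(0.8, 0.6, 0.2)

print(round(selective, 2), round(unselective, 2))  # 0.8 0.25
```

With the same activation rate during the state of interest, the inference is strong only when the region is selective. When the region responds in many contexts, which is the usual case, observing activation barely moves the estimate above the assumed 20% prior.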

The ethical dilemmas discussed in the book are likely to be just the tip of the iceberg, as developments boost our ability to ‘read minds’. Once neuroimaging enables accurate prediction of future behaviour, will we see dystopias such as that in Steven Spielberg’s 2002 film Minority Report, in which people are arrested for crimes that they have not yet committed? Or will we be able to balance human rights with technological power? The issues raised in this book provide a good grounding for thinking about that particular brave new world.

Russell Poldrack/Nature
